Welcome to the latest edition of FindBiometrics’ AI update. Here’s the latest big news on the shifting landscape of AI and identity technology:

Google may start developing its tensor processing units, or TPUs, in-house in order to reduce costs, according to a report from The Information. TPUs are designed to facilitate neural network machine learning, and are optimized for Google’s TensorFlow software. The company has been buying them from Broadcom, but has lately been at odds with its supplier over the latter’s pricing.

A government research institute in Abu Dhabi has launched an AI model that is better than Meta’s Llama 2 open source model and “on par” with Google’s PaLM 2, according to the AI model library Hugging Face. An official with the emirate’s Advanced Technology Research Council, the agency behind the “Falcon 180B” model, says it plans to launch a state-backed company later this year that will compete with major AI firms like OpenAI.

OpenAI, meanwhile, has launched the latest version of its DALL-E text-to-image generator, DALL-E 3. Its most notable improvement is the ability to render text within a generated image, which positions it in competition with Ideogram, a startup that specializes in this skill. OpenAI says it has once again taken measures “to limit DALL-E 3’s ability to generate violent, adult, or hateful content.”

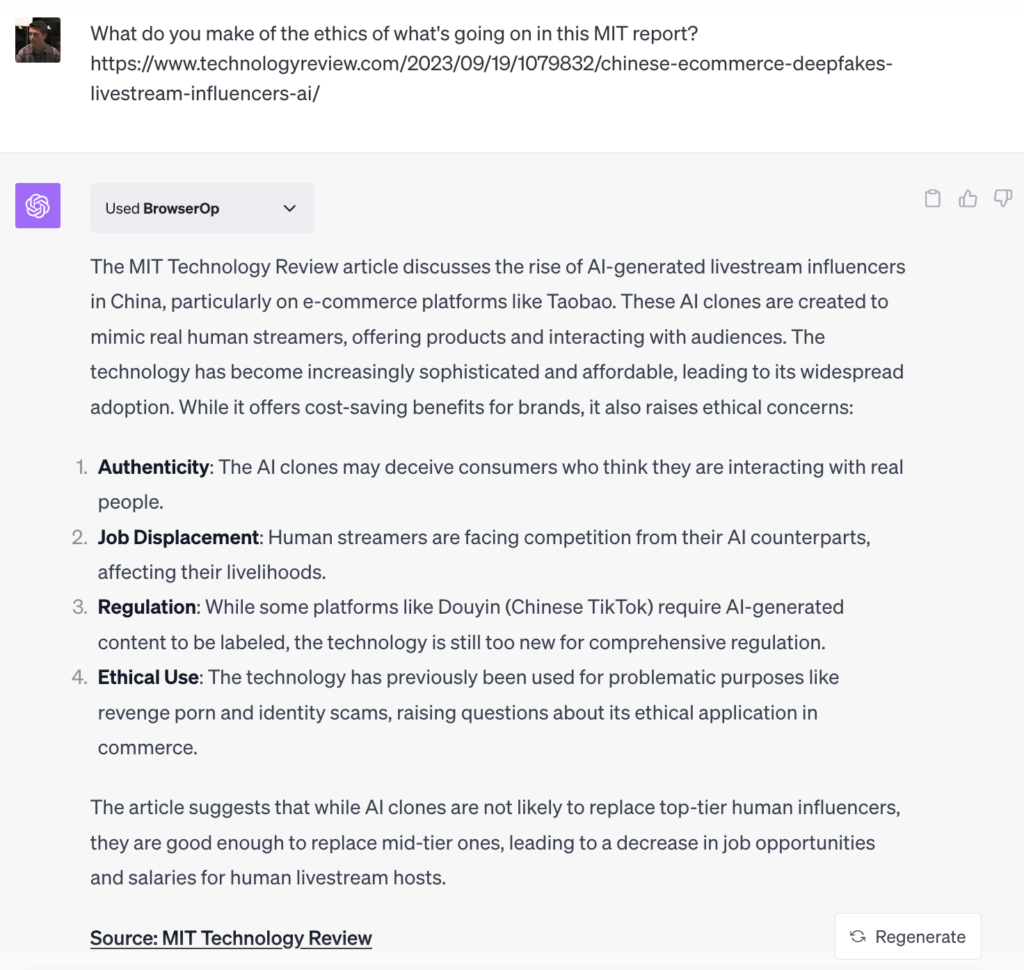

Chinese AI companies are now offering deepfake clones of influencers to market clients’ products. According to MIT Technology Review, a basic clone can be modelled for about $1,100, while more sophisticated replicants can cost several thousand dollars. The deepfake influencers are used to peddle products around the clock on platforms like Taobao, the most popular e-commerce site in the country.

A proposed bill from Senator Ron Wyden, D-Ore., Senator Cory Booker, D-N.J., and Representative Yvette Clarke, D-N.Y., would require companies to conduct impact assessments for considerations like effectiveness and bias when using AI to make “critical decisions.” The Algorithmic Accountability Act of 2023 would task the Federal Trade Commission with establishing guidelines for such assessments, and mandate it to publish an “annual anonymized aggregate report” on related trends.

The UK government has published a case study on Anekanta AI’s AI Risk Intelligence System, which revolves around the use of a detailed questionnaire for developers and users of “high-risk AI” systems, with a focus on biometric technologies. The system is aimed at standardizing how such technologies are used and understood. Anekanta AI is a private company, and its case study was jointly published by the UK government’s Centre for Data Ethics and Innovation and the Department for Science, Innovation and Technology.

The Department of Homeland Security has announced new internal rules for its use of artificial intelligence, including provisions aimed at ensuring that Americans can decline to undergo face scans at airports and in other situations. The rules will also mandate human review of automated biometric matches based on facial recognition, and will require the DHS to periodically audit its facial recognition systems to ensure that they aren’t being misused. In announcing the guidelines, the DHS also named its CIO, Eric Hysen, as its Chief AI Officer.

The chatbot’s take: We asked ChatGPT for its take on the ethics of deepfake marketing influencers.

–

September 22, 2023 – by Alex Perala
